For this month’s series on PPC Hero, we’re taking a look back through our archives and revisiting some ideas we’ve talked about in the past and how the past year or more has changed our thinking about them. Things are always changing in PPC, and our views and opinions about it are no exception.

As you spend more and more time doing the same thing, it’s expected that you’ll begin to evolve in how you do it. When it comes to ad testing and ad analysis, I’ve seen my fair share of reports and run vastly differing types of analyses.

The question for this series is how things have changed over time. How has my method changed, and how have I adapted to the everlasting flow of new tools and features Google has provided? The following is a breakdown of four examples of ad test evolution from this past year.

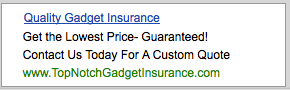

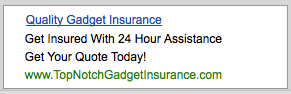

1) Content – Obviously, the content of your ad is a huge part of the battle. But how do you evaluate it? What combinations of copy do you look at? An old standard of mine is to write ads whose 3 lines function autonomously:

This allows for very straightforward testing using slightly varied duplicates: substitute new Line 1 for old Line 1 and find the winner, then substitute new Line 2 for old Line 2 and find the winner, and so on. But moving beyond that, I’ve found some new approaches that deal more with the big picture. Just as Google wants us to constantly think about our users’ experience, I have pushed myself to understand the user’s intent.
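The one-line-at-a-time substitution described above can be sketched in a few lines of Python. This is only an illustration: the `Ad` tuple, the `one_line_variants` helper, and the placeholder copy are all hypothetical names invented for the example, not anything from AdWords itself.

```python
from typing import NamedTuple


class Ad(NamedTuple):
    """A three-line text ad (hypothetical structure for illustration)."""
    line1: str
    line2: str
    line3: str


def one_line_variants(control: Ad, position: int, candidates: list[str]) -> list[Ad]:
    """Return copies of `control` where only the line at `position` (0-2) is swapped."""
    variants = []
    for text in candidates:
        lines = list(control)
        lines[position] = text  # every other line stays identical to the control
        variants.append(Ad(*lines))
    return variants


# Placeholder copy, purely for demonstration.
control = Ad("Original Line 1", "Original Line 2", "Original Line 3")
round_one = one_line_variants(control, 0, ["New Line 1"])
```

Because each variant differs from the control in exactly one line, any difference in performance can be attributed to that line before moving on to the next round.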

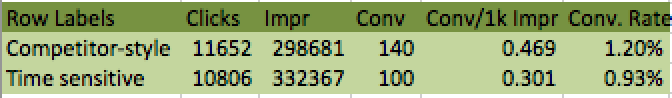

One thing I have been testing more recently is the tone of the message. This data comes from a test where the 1st ad announced the close of sale season, while the 2nd ad used the language common among my competitors.

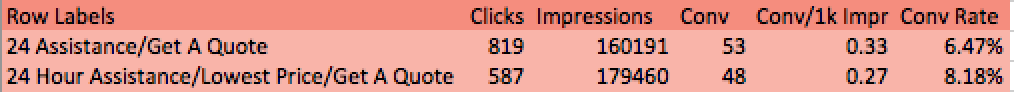

Another approach involves a combination of messaging. Before evaluating your ad copy, you need to know what lines you’ve drawn that distinguish one ad from another. Here I’ve included 1 feature and 1 Call to Action versus 2 features and 1 Call to Action.

The results I encountered showed me that the first ad’s click-through rate was higher (a surprise to me!) and that while the overall conversion rate (that is, conversions per click) was lower, the number of leads acquired per 1000 impressions was better for it, too!
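To see how an ad can lose on conversion rate and still win on leads per 1000 impressions, here is a small worked sketch. The `ad_metrics` function is a hypothetical helper and the numbers are made up for illustration; they are not the figures from my test.

```python
def ad_metrics(impressions: int, clicks: int, conversions: int) -> dict[str, float]:
    """Compute CTR, conversions per click, and conversions per 1000 impressions."""
    ctr = clicks / impressions if impressions else 0.0
    conv_rate = conversions / clicks if clicks else 0.0
    leads_per_1000 = 1000 * conversions / impressions if impressions else 0.0
    return {"ctr": ctr, "conv_rate": conv_rate, "leads_per_1000": leads_per_1000}


# Hypothetical numbers: ad A has the higher CTR, ad B the higher conversion rate.
ad_a = ad_metrics(impressions=10_000, clicks=500, conversions=25)
ad_b = ad_metrics(impressions=10_000, clicks=300, conversions=21)
```

Leads per 1000 impressions is just CTR multiplied by conversion rate (times 1000), so a big enough CTR advantage can outweigh a weaker conversion rate. In this made-up case, ad A converts 5% of clicks against ad B's 7%, yet ad A still brings in 2.5 leads per 1000 impressions against 2.1.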

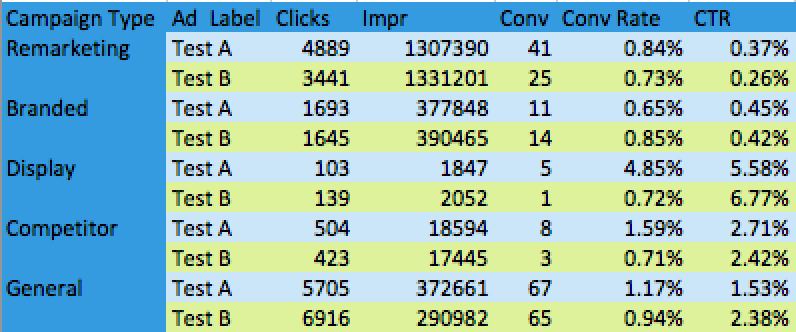

2) Campaign type segmentation – A valuable lesson to be learned is that two identical ads placed in two different types of campaigns may not perform equally! Branded may be skewed, as users are more likely to click on an ad when they’ve already searched for your brand. Remarketing is based on users who’ve already expressed interest and are therefore further into the process of converting. Display will likely have a lower CTR simply because of a huge impression volume that warps the click-to-impression ratio. And campaigns based on competitors may have results all across the spectrum depending on your industry, your competitors, and your bidding strategy. Below is an example of the ad test results I saw upon segmenting by campaign type, instead of simply Test A versus Test B.

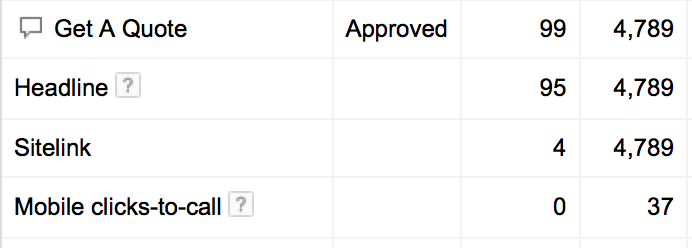
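If you export your ad report rather than eyeballing it in the interface, the same segmentation can be sketched in Python. Everything here is an assumption for illustration: the row layout, the `ctr_by_segment` helper, and the sample numbers are hypothetical, not my account data.

```python
from collections import defaultdict

# Hypothetical export rows: one row per ad per campaign type.
rows = [
    {"campaign_type": "Branded", "ad": "A", "impressions": 1_000, "clicks": 100},
    {"campaign_type": "Branded", "ad": "B", "impressions": 1_000, "clicks": 80},
    {"campaign_type": "Display", "ad": "A", "impressions": 50_000, "clicks": 100},
    {"campaign_type": "Display", "ad": "B", "impressions": 50_000, "clicks": 150},
]


def ctr_by_segment(rows, keys):
    """Sum impressions and clicks per segment (defined by `keys`), then compute CTR."""
    totals = defaultdict(lambda: {"impressions": 0, "clicks": 0})
    for row in rows:
        segment = tuple(row[k] for k in keys)
        totals[segment]["impressions"] += row["impressions"]
        totals[segment]["clicks"] += row["clicks"]
    return {
        segment: t["clicks"] / t["impressions"]
        for segment, t in totals.items()
        if t["impressions"] > 0
    }


pooled = ctr_by_segment(rows, ["ad"])                      # Test A versus Test B
segmented = ctr_by_segment(rows, ["campaign_type", "ad"])  # split by campaign type
```

In this made-up data, ad B wins when everything is pooled, but ad A wins inside the Branded campaign. The Display impressions simply swamp everything else, which is exactly why the pooled view can mislead.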

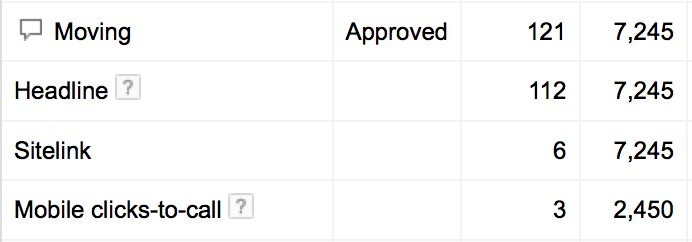

3) Sitelink performance – The Google gods gave us the gift of sitelink reports this past year, and it’s a HUGE leg-up on knowing what sitelinks actually do for your ads. Assuming we’ve all upgraded our extensions, you can now assign sitelinks by ad group, so there are boatloads of information waiting to be evaluated. British Sam did a great job assessing this topic already, but there is so much fun to be had that I’ve included a glimpse into my extension analysis. I use call extensions in a portion of my ads. Where I used to just guess about which extension had an impact on my user interaction, now I can see what’s truly relevant!

I get to use this report to identify not only the sitelink language that garners the most clicks, but also how well the call extension performs in the ad. The mobile click-to-call feature has received 3 clicks when paired with the “Moving” sitelink, as opposed to the “Get A Quote” link. Is it possible that because users don’t always see “Get A Quote” as an option, they call instead? I find this data extremely useful when trying to better target users on specific devices or in certain settings.

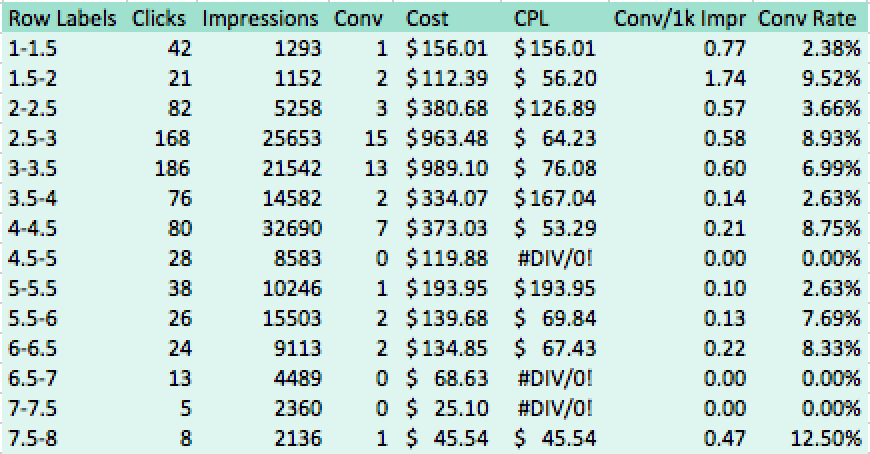

4) New approach based on old metric – What are new spins on reports we’ve been able to access this whole time? As my ad testing has helped me identify what language and tone work, I’m more confident in analyzing the more concrete aspects of ads. Our own Sean Q wrote a great piece about evaluating ad performance based on position. I’ve pulled an ad report with results segmented by day to get a detailed look at how users respond to ad rank. I then created a Pivot Table where ad rank was split into buckets of 0.5 of a position. The results show that while I may be getting a portion of my impressions from ranking 2.5-3.5, I actually could pull the most conversions per thousand impressions from 1.5-2.0.

Note: Be sure to use a large amount of data, as a small sample may only have a limited variety of ad rank options. If you’ve only got 1000 impressions and they’re ALL split between ranking 3-4, of course you’re going to think that positions 3-4 are your best. Be sure you’ve got a large enough sample size to get a full scope of your performance!
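For anyone who would rather script this than build the Pivot Table by hand, here is a rough Python sketch of the same bucketing. It assumes each daily row is a tuple of (average position, impressions, conversions); the function names, the `min_impressions` cutoff, and the sample rows are all hypothetical and only there to illustrate the idea.

```python
import math
from collections import defaultdict


def position_bucket(avg_position: float, width: float = 0.5) -> float:
    """Return the lower edge of the bucket, e.g. 1.7 falls in the 1.5 bucket."""
    return math.floor(avg_position / width) * width


def conversions_per_1000_by_bucket(daily_rows, min_impressions=0):
    """Group daily rows into 0.5-position buckets and compute conversions per 1000 impressions.

    Buckets with fewer than `min_impressions` impressions are dropped,
    since a thin bucket says very little about real performance.
    """
    totals = defaultdict(lambda: [0, 0])  # bucket -> [impressions, conversions]
    for avg_position, impressions, conversions in daily_rows:
        bucket = position_bucket(avg_position)
        totals[bucket][0] += impressions
        totals[bucket][1] += conversions
    return {
        bucket: 1000 * conv / imp
        for bucket, (imp, conv) in sorted(totals.items())
        if imp > 0 and imp >= min_impressions
    }


# Hypothetical daily rows: (average position, impressions, conversions).
daily_rows = [
    (1.7, 400, 4),
    (1.9, 600, 5),
    (2.6, 2000, 6),
    (3.2, 1500, 3),
    (3.4, 100, 0),
    (4.1, 50, 1),
]
result = conversions_per_1000_by_bucket(daily_rows, min_impressions=500)
```

With this made-up data, the 4.0 bucket has only 50 impressions and gets filtered out, which is the scripted version of the sample-size warning in the note above.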

How has your ad testing changed over the past year? Are you finding yourself in mountains of wonderful data, too? Let us know what new ideas you’ve got for utilizing your ad report data, and thanks for reading!