When it comes to success with Facebook Advertising, audience targeting is the name of the game. While other factors like quality of ad creative should not be overlooked, performance in social is largely contingent on audience creation.

The options for targeting within Facebook are seemingly endless, providing an incredible combination of both scale and accuracy. We’ve discussed a variety of these opportunities at length in the past:

- Alaina Thompson explains how to Speak To The Audiences You Value Most

- Chadd Powell provides A Primer on Facebook Remarketing and Tips For Using Facebook Lookalike Audiences

- Carrie Albright shares The 7 Things You Wish You’d Known About Facebook

So with all this incredible targeting technology, I made the odd decision to use literally none of it. I did the unthinkable and launched an audience test with zero restrictions beyond a max bid and a geotarget. Now, before you start thinking I’m terrible at my job, give me a chance to explain myself.

The Background

I recently started working with a high-profile client looking to drive App Installs. While they are an established player domestically, their market share and brand recognition are significantly lower internationally. With this in mind, we began to expand into new international markets.

Prior to launching new campaigns, I did my usual due diligence using these 3 Tips To Better Understand Your Facebook Audiences. From there I estimated the audience size of targeting criteria that had performed admirably in other markets. All of this information was then combined to create a layered targeting strategy with predefined testing periods. We opted for an automated CPI-based bidding strategy with our max bid set at goal. The early results were extremely exciting. We were driving installs well below goal, all while seeing strong retention rates. Test after test showed great results at a high level, but this type of success actually created unexpected obstacles. The issue was that despite distinct targeting criteria, no specific segment was differentiating itself in terms of performance.

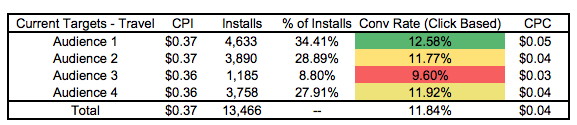

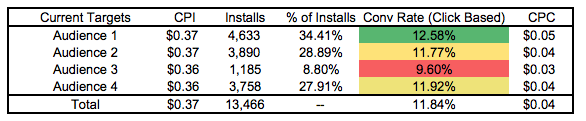

Across the board, CPI was remaining incredibly consistent. Through the first iteration of the test (shown above) we were able to declare winners based on scale and conversion rate. However, each round of testing saw similar success regardless of the specific targeting. With this in mind we arrived at two explanations:

- Our team is exceptionally talented and smart. We can do no wrong.

- Targeting is irrelevant: lack of competition, the broad appeal of our product, and the prevalence of inventory are driving success.

Now while option 1 isn’t necessarily untrue, continued testing showed that option 2 was the true explanation. Essentially, smaller audiences provided efficient performance but were difficult to scale. Large audiences were providing efficient performance while proving much easier to scale (duh, right?). Given these results we opted for an unconventional approach to achieve the largest possible audience: remove all targeting restrictions aside from geo and let the Facebook bidding algorithm go to work.

The Results

I must admit, I was a bit terrified to launch this test. We set up a host of safeguards ranging from limited budgets to extremely conservative initial bids. Theoretically speaking there was little to worry about. We did our homework ahead of time to support this decision, but launching a test this broad feels so incredibly foreign. So that said, how’d the test go?

Amazing. I proved myself to be a completely useless cog in this marketing machine. Running with no targeting performed radically better than the audiences we had previously built to generate the most relevant traffic possible.

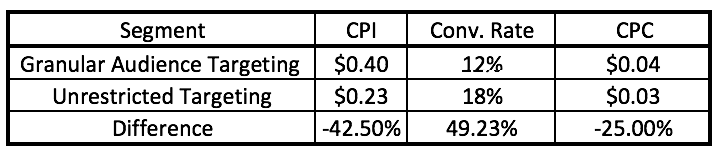

My expectation from this test was that we’d be able to scale spend better, but I did not anticipate the significant improvement in efficiency. I expected that a larger audience might yield a lower CPC and in turn a lower CPI, but the 42.50% drop in CPI is better than I ever could have expected. Furthermore, conversion rate jumped from 12% to 18%. These results remained consistent even when broken down across placement and platform.
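For anyone who wants to sanity-check how those two numbers fit together, here is a rough back-of-the-envelope sketch. It assumes the conversion rate is installs per click, so that CPI = CPC / conversion rate; that definition is an assumption for illustration and may not match how the figures were actually reported. The function and variable names are hypothetical. Under that assumption, the conversion rate lift alone would account for roughly a third off CPI, and the rest of the 42.50% drop would have to come from cheaper clicks.

```python
def implied_cpc_change(cpi_change: float, cvr_before: float, cvr_after: float) -> float:
    """Relative CPC change implied by a relative CPI change and a CVR shift.

    Assumes CPI = CPC / CVR, with CVR measured as installs per click.
    """
    # CPI_after / CPI_before = (CPC_after / CPC_before) * (CVR_before / CVR_after)
    cpi_ratio = 1.0 + cpi_change
    cpc_ratio = cpi_ratio * (cvr_after / cvr_before)
    return cpc_ratio - 1.0


# Figures quoted in the post: CPI down 42.50%, conversion rate 12% -> 18%.
cvr_before, cvr_after = 0.12, 0.18

# CPI change we would see from the conversion rate lift alone (CPC held flat).
cvr_only_cpi_change = (cvr_before / cvr_after) - 1.0

# CPC change needed to explain the rest of the observed CPI drop.
cpc_change = implied_cpc_change(-0.425, cvr_before, cvr_after)

print(f"CPI change from conversion rate alone: {cvr_only_cpi_change:.2%}")
print(f"Implied CPC change: {cpc_change:.2%}")
```

If the assumption holds, both of my expectations showed up in the data: clicks got cheaper, and a larger share of them turned into installs.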

The Takeaway

So aside from proving that all of my due diligence, best practices, and marketing experience were unnecessary for this initiative, what else can be learned from this test?

Overall I think the applications of this test are a bit limited. I by no means would be willing to institute an audience of this type for one of my lead generation or e-commerce clients. This is a unique scenario where the client offers a useful, broad product with an extremely low barrier to entry in acquisition. With the combination of significant supply and low competition, success was easy to come by in this geo. Ultimately it reaffirms the message of KISS: keep it simple, stupid. When your test results are showing consistent value, don’t overthink the strategy. Rely on the data, tools, and experience you have at hand to make a defensible, informed decision.

So what are the next steps for me now that I’ve essentially removed all targeting testing possibilities? We’ll move on to generating an even stronger return with a comprehensive creative test.

Feature image courtesy of Emilio Küffer