At the end of June, I wrote about the new experiment settings in Google AdWords, which let you test changes to different elements of your account to see whether they really improve performance. For instance, you can lower your bids by 30% and then see whether you can still maintain the same level of clicks at the lower bid. Since this feature isn't yet available to everyone, I thought I would follow up my original post with some tips I learned along the way, as well as my results.

Just over a month ago, I launched several experiments testing how changes to keyword bids would affect my performance. One client in particular has asked me many times, "If we give you an unlimited budget, can you get more conversions?" In other words, is the market saturated, so that we are already getting all we can, or is there an opportunity to get additional conversions by spending more money? It is not often that a client is willing to give you as much money as you can spend, so I naturally took on the challenge: does spending more really get me more, or does it just drive up costs?

The Google Experiments tool was the best fit for this test because it let me keep tabs on the data for the "control," meaning the bids as they were currently set. The experiment tool splits traffic between your control group and whatever you are testing. So I took one campaign and set up an experiment that raised all keyword bids by 10%. My hope was that this would place my client in a slightly higher position, giving better visibility and more leads. My concern was that along with the additional visibility, my spend would also increase, resulting in a much higher cost per lead.

I went to the Settings tab and set up the basics for the experiment. I ran it for a total of 30 days, because this campaign typically gets enough traffic in 30 days to give me enough data to make a decision. I also set the traffic split to 50/50, so 50% of my traffic ran at the control keyword bids and 50% ran at the slightly higher experiment bids.
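Conceptually, this setup comes down to two pieces of arithmetic: a bid multiplier for the experiment arm and a 50/50 split of traffic between the two arms. The Python sketch below illustrates only that logic. It is not how AdWords implements experiments and does not call any AdWords API; the keyword names, bids, and the hash-based assignment in `assign_arm` are hypothetical assumptions for illustration.

```python
import hashlib
from decimal import Decimal, ROUND_HALF_UP


def experiment_bid(control_bid: Decimal, change_pct: Decimal) -> Decimal:
    """Return the experiment bid after a percentage change, rounded to the cent.

    change_pct=Decimal("10") raises the bid by 10%; Decimal("-30") lowers it by 30%.
    """
    multiplier = Decimal(1) + change_pct / Decimal(100)
    return (control_bid * multiplier).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


def assign_arm(auction_id: str, experiment_share: float = 0.5) -> str:
    """Hypothetical assignment: map an ID to 'control' or 'experiment'.

    Hashing the ID gives a stable, roughly uniform split;
    experiment_share=0.5 corresponds to a 50/50 split.
    """
    digest = hashlib.sha256(auction_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64  # value in [0, 1)
    return "experiment" if bucket < experiment_share else "control"


# Hypothetical control bids for three keywords
control_bids = {
    "keyword 1": Decimal("1.50"),
    "keyword 2": Decimal("0.85"),
    "keyword 3": Decimal("2.20"),
}
# The experiment arm raises every bid by 10%
experiment_bids = {kw: experiment_bid(bid, Decimal("10")) for kw, bid in control_bids.items()}
print(experiment_bids)
```

Hashing an ID, rather than flipping a coin each time, keeps the same ID in the same arm if it is evaluated twice. A negative `change_pct` models the 30% bid-reduction test mentioned earlier.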

As I went through the experiment period, I discovered a few tips that I would like to pass along:

AdWords Editor does not like experiments. If you use AdWords Editor to make bulk changes to your account, make sure you don't try to adjust campaigns that are running an experiment. The Editor won't let you upload those changes, which is a good thing because it keeps you from ruining the experiment, but it is frustrating if you don't know what the error message means. It will not tell you that you are running an experiment and can't update the campaign; instead, it gives you a general error message when you try to post changes to the account:

“AdWords Editor received an error from AdWords that it does not recognize. This probably means that the version of AdWords Editor that you are running is out-of-date; please check to see if a new version is now available. (Error number: 211)”

This does not mean you need a new version of AdWords Editor. It just means that you likely have changes to a campaign running an experiment, or to another automated tool such as Conversion Optimizer, and AdWords Editor doesn't know what to do with them. The quick solution is to revert the changes you tried to make to the experiment campaign and then try to post again. Everything should work just fine.

Experiments end on your end date, and that is it. You don't get a courtesy message from Google reminding you that your experiment has ended. It just stops running, and all of your traffic goes back to your "pre-experiment" settings. Make a note of your end date so you remember to go back and review your results.

If you don't want to apply all the changes, you have to make them manually. Say your experiment has ended and the results show that you should increase bids by 10% for all your keywords. To make this change, click the "Apply: launch changes fully" button in the experiments section of the Settings tab, and you are done. If you only want to apply the change to certain keywords, you have to update each of those keywords manually, just as you would make any other bid change. At some point this may become easier (especially if it is integrated into AdWords Editor), but for now you have to do it the old-fashioned way, one keyword at a time.
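If you do end up applying the change keyword by keyword, it helps to decide up front which keywords qualify. The sketch below assumes you have copied each keyword's control and experiment cost and conversions out of the experiment report by hand. The `KeywordResult` structure, the numbers, and the decision rule (keep the higher bid only where it added conversions and the experiment's cost per lead still meets a goal) are all hypothetical, not an AdWords feature.

```python
from dataclasses import dataclass


@dataclass
class KeywordResult:
    """Control vs. experiment figures for one keyword, copied from the report."""
    keyword: str
    control_cost: float
    control_conversions: int
    experiment_cost: float
    experiment_conversions: int


def cost_per_lead(cost: float, conversions: int) -> float:
    """Average cost per conversion; infinite if nothing converted."""
    return cost / conversions if conversions else float("inf")


def keywords_to_update(results: list[KeywordResult], cpl_goal: float) -> list[str]:
    """Hypothetical rule: keep the higher bid only where it added conversions
    and the experiment's cost per lead still meets the goal."""
    selected = []
    for r in results:
        added_leads = r.experiment_conversions > r.control_conversions
        within_goal = cost_per_lead(r.experiment_cost, r.experiment_conversions) <= cpl_goal
        if added_leads and within_goal:
            selected.append(r.keyword)
    return selected


# Hypothetical per-keyword results
results = [
    KeywordResult("keyword A", 400.0, 20, 460.0, 24),  # more leads, CPL about 19.17
    KeywordResult("keyword B", 300.0, 10, 600.0, 12),  # more leads, but CPL 50.00
    KeywordResult("keyword C", 200.0, 8, 230.0, 8),    # no extra leads
]
print(keywords_to_update(results, cpl_goal=25.0))  # prints ['keyword A']
```

The output is simply a checklist; each keyword on it would still be updated by hand, as described above.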

Once you launch or delete the experiment changes, your data is gone. As soon as you decide to either apply all of your changes or delete the experiment, all of the experiment data you collected disappears. Take screenshots before you do, in case you want to refer to the data in the future.

So, how did my experiment turn out?

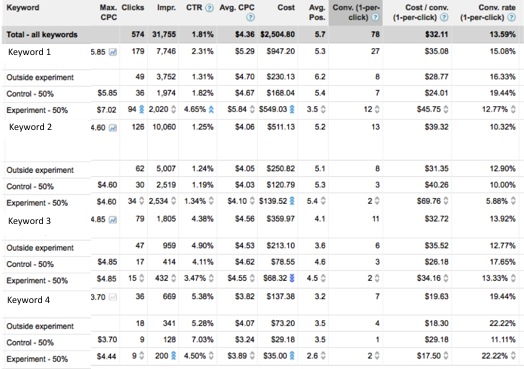

Overall, the results were in line with what I suspected, but the benefit is having actual data that shows how each keyword performed at each bid level. The 10% keyword bid increase did raise my position slightly, and for the most part that meant additional clicks, but it also brought higher spend. On some of my keywords, my click-through rate also increased, which I expected to see along with the additional clicks.

See the experiment data for Keyword #1. The small blue arrows next to a data point show whether that result is statistically significant, meaning it is unlikely to have occurred by chance. For Keyword #1, you can see the increase in clicks and spend, but what my client really cares about is conversions. Although I gained 5 additional conversions over the control, I spent significantly more, almost doubling my cost per lead. Given my goals for the account, the number of additional conversions is so small compared with the increase in spend that it isn't worth raising our bids for this campaign. We might get a few more conversions, but we would spend significantly more, driving our overall cost per lead well above our goal.
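To reason about a result like Keyword #1's outside the interface, you can compare the cost per lead in each arm, the marginal cost of each extra conversion, and whether the difference in conversion rate could plausibly be noise. The post does not say which statistical test AdWords uses for its arrows, so the sketch below substitutes a standard two-sided, two-proportion z-test on conversions per click as an assumption. The figures are hypothetical, loosely shaped like the Keyword #1 story (five extra conversions at roughly double the cost per lead), and are not the actual experiment data.

```python
import math


def cost_per_lead(cost: float, conversions: int) -> float:
    """Average cost per conversion; infinite if nothing converted."""
    return cost / conversions if conversions else math.inf


def incremental_cost_per_conversion(control_cost, control_conv, exp_cost, exp_conv):
    """Extra spend divided by extra conversions (infinite if there are no extra conversions)."""
    extra_conversions = exp_conv - control_conv
    if extra_conversions <= 0:
        return math.inf
    return (exp_cost - control_cost) / extra_conversions


def two_proportion_z_test(conv_a, clicks_a, conv_b, clicks_b):
    """Two-sided z-test for a difference in conversion rate (conversions / clicks).

    Uses the pooled rate under the null hypothesis that both arms convert equally.
    Returns (z, p_value).
    """
    rate_a = conv_a / clicks_a
    rate_b = conv_b / clicks_b
    pooled = (conv_a + conv_b) / (clicks_a + clicks_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / clicks_a + 1 / clicks_b))
    if se == 0:  # both arms converted at 0% or 100%: no evidence of a difference
        return 0.0, 1.0
    z = (rate_b - rate_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided p-value for a standard normal
    return z, p_value


# Hypothetical figures (control vs. +10% bid experiment), not the real report
control = {"clicks": 1000, "conversions": 20, "cost": 500.0}
experiment = {"clicks": 1200, "conversions": 25, "cost": 1200.0}

print("Control CPL:", cost_per_lead(control["cost"], control["conversions"]))
print("Experiment CPL:", cost_per_lead(experiment["cost"], experiment["conversions"]))
print("Cost per extra conversion:", incremental_cost_per_conversion(
    control["cost"], control["conversions"], experiment["cost"], experiment["conversions"]))
z, p = two_proportion_z_test(control["conversions"], control["clicks"],
                             experiment["conversions"], experiment["clicks"])
print(f"z = {z:.2f}, p = {p:.3f}")
```

With these made-up figures, the cost per lead rises from 25 to 48, each of the five extra conversions effectively costs 140, and the change in conversion rate is not statistically significant at the usual 5% level. That gap is the kind of result that makes a bid increase hard to justify.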

At the end of the day, the Google Experiments tool is well worth the five minutes it takes to set up. I have several more experiments running and am seeing slightly different results in each one, so make sure you try the same experiment in different campaigns to see whether the performance results are really tied to the brand as a whole or to just one campaign. The more you test, the more data you will have to keep your campaigns performing at their best.