I’ve never really understood the hype and over-the-top attention afforded to quality score. I understand why search engines need an algorithm that determines bid prices and ad rank. What I can’t wrap my head around is why so many marketers are fixated on a metric that ultimately doesn’t have much bearing on the performance of an account from a business perspective.

Years ago, the concept of quality score (QS) was introduced as a means of quantifying the relevance of keywords and ads. From the moment QS was introduced, PPC professionals were conditioned to believe that it is by far the most important metric we need to optimize. I’ve been involved in countless conversations with clients and search marketers alike where it’s clear they treat quality score improvement as if it’s the Holy Grail of account optimization.

This post will explain why I believe quality score should be used as nothing more than a guide to account health and not something that should be optimized at the expense of meeting client goals.

Reason #1: Higher Quality Score Doesn’t Translate into Better Performance

I’ve rarely found that higher quality scores directly translate into improved performance. In most accounts I’ve managed, keywords with lower quality scores outperform keywords with higher ones.

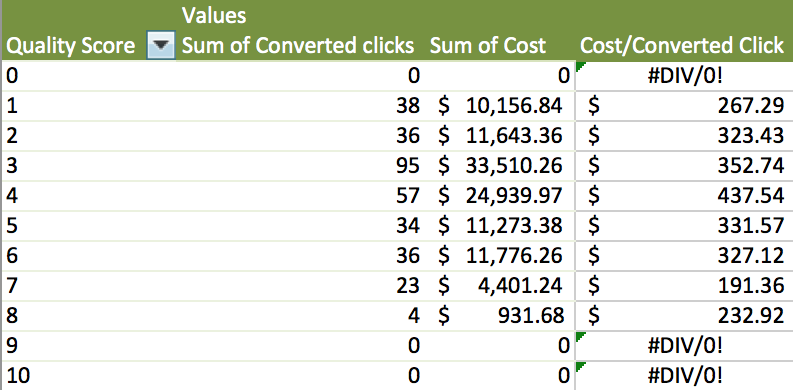

Let’s take a look at an analysis I did on an account’s performance by QS. This analysis is of cost per converted click by quality score. I pulled year-to-date numbers so the data set would be large enough to make the analysis meaningful.

In this example, keywords with a QS of 1 have a cost per converted click of approximately $267. That is cheaper than the cost per converted click for any of the keywords in the QS 2-6 range. Cost per converted click for keywords in the QS 7-8 range is cheaper still (as we would normally expect to see), but those keywords account for far less spend and far fewer conversions.

If I followed the conventional logic to its natural conclusion and optimized the keywords in the QS 1 range for a higher score, wouldn’t I be optimizing them for worse performance? Maybe, maybe not. But if I have to prioritize what’s going to help me reach account goals more quickly, I’m going to work on improving the conversion rate of those keywords. I would expect that to yield better results sooner than running numerous ad tests and applying other optimizations just to increase the quality score of a particular keyword.

The key takeaway from this example is to analyze account performance by QS before investing a ton of time into improving scores. If the evidence doesn’t suggest there’s a reasonable chance for a game-changing shift in performance by raising quality score, focus your optimizations with other goals in mind. Look to improve conversion rate or lower costs in order to improve profitability.
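As a rough illustration of that kind of breakdown, here is a minimal Python sketch that groups keyword rows by quality score and computes cost per converted click for each group. The rows are made-up numbers, not the account data discussed above, and the function name `cost_per_converted_click_by_qs` is hypothetical. In practice the rows would come from a keyword report exported from the ad platform.

```python
from collections import defaultdict

# Hypothetical keyword rows: (quality_score, cost, converted_clicks).
# These values are invented for illustration only.
rows = [
    (1, 500.0, 2),
    (1, 300.0, 2),
    (4, 900.0, 3),
    (7, 120.0, 1),
    (7, 80.0, 1),
    (9, 50.0, 0),
]


def cost_per_converted_click_by_qs(rows):
    """Sum cost and converted clicks per quality score, then divide."""
    totals = defaultdict(lambda: [0.0, 0])
    for qs, cost, conversions in rows:
        totals[qs][0] += cost
        totals[qs][1] += conversions

    report = {}
    for qs, (cost, conversions) in sorted(totals.items()):
        # With zero converted clicks the ratio is undefined, so report None.
        cpcc = cost / conversions if conversions else None
        report[qs] = {"cost": cost, "conversions": conversions, "cpcc": cpcc}
    return report


report = cost_per_converted_click_by_qs(rows)
for qs, r in report.items():
    cpcc = "n/a" if r["cpcc"] is None else f"${r['cpcc']:.2f}"
    print(f"QS {qs}: spend ${r['cost']:.2f}, "
          f"converted clicks {r['conversions']}, cost per converted click {cpcc}")
```

Keeping spend and converted clicks next to the ratio matters, because a score bucket can look cheap simply because it has very little volume behind it.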

Reason #2: Automated Opinions: Another Reason I’m Not a Fan of the QS Metric

Three key components make up the quality score calculation. These components are expected click thru rate, ad relevance, and landing page experience. Below is a brief breakdown I’ve created for myself to help me (and hopefully you) better understand how quality score is calculated.

- Expected Click Thru Rate: Using historical CTR% data, the search engine is assigning a quality score partly based on what a keyword’s click thru rate might be.

- Ad Relevance: How closely do your keywords and ads relate to each other? A big part of determining ad relevance is whether or not keywords are included within the ad copy, but it doesn’t factor in true relevance such as conversion rate.

- Landing Page Experience: According to an automated calculation (which I interpret as ‘automated opinion’), the search engine is determining how relevant and useful your landing page experience is.

The assumptions search engines make when determining click thru rate, ad relevance, and landing page experience are highly subjective, off base, and not transparent enough to take definitive action. Let’s take a look at a few examples that highlight this issue.

Example A

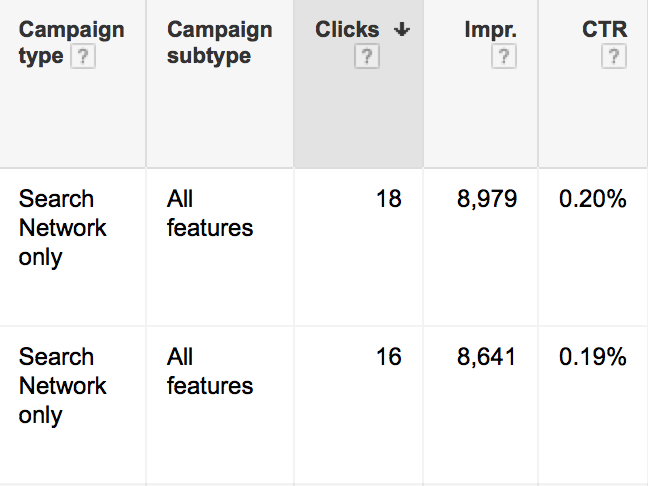

The ad group in the example above has a click thru rate of 0.19%. Ad version A’s click thru rate is 0.20% and ad version B’s is nearly identical at 0.19%.

When I run diagnostics on this ad group’s keywords, the system informs me that expected click thru rate is ‘below average’. This raises the question: what CTR% do I need to achieve in order to have an ‘average’ or ‘above average’ expected click thru rate? Is it 0.50%? Is it 2%? If I reach the ‘average’ or ‘above average’ rating, will my quality score improve?

When optimizing an account, I look for quick impact. If I chased the ‘expected CTR’ metric, I would be wasting valuable time optimizing for a metric that I have no data on. Even if I improve CTR, there’s no guarantee I’ll reach the ‘expected CTR’ goal because I don’t know what that metric is. I would rather focus on optimizations that will have a more immediate impact on account performance, such as lifting conversion rate.

If I’m testing ad copy for the purpose of improving click thru rate, I’m doing it solely to bring more visitors to my landing pages so they can convert. I’m not trying to hit a mysterious expected click thru rate that may or may not be reachable, and that comes with no projection of how much performance would improve.
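To make the trade-off concrete, here is a small back-of-the-envelope sketch in Python. It assumes the simple funnel conversions = impressions × CTR × conversion rate and a fixed cost per click. That fixed price is a simplifying assumption, since it ignores any effect CTR or quality score may have on what a click costs. All inputs are hypothetical, and the function name `funnel` is made up for this example.

```python
def funnel(impressions, ctr, conversion_rate, cost_per_click):
    """Return clicks, conversions, cost, and cost per conversion for one keyword.

    Assumes a fixed cost per click (a simplification, see the text above).
    """
    clicks = impressions * ctr
    conversions = clicks * conversion_rate
    cost = clicks * cost_per_click
    cost_per_conversion = cost / conversions if conversions else None
    return {
        "clicks": clicks,
        "conversions": conversions,
        "cost": cost,
        "cost_per_conversion": cost_per_conversion,
    }


# Hypothetical inputs, chosen only to illustrate the arithmetic.
scenarios = {
    "baseline": funnel(100_000, 0.002, 0.05, 2.00),
    "double CTR": funnel(100_000, 0.004, 0.05, 2.00),
    "double conversion rate": funnel(100_000, 0.002, 0.10, 2.00),
}

for name, s in scenarios.items():
    print(f"{name}: clicks {s['clicks']:.0f}, conversions {s['conversions']:.0f}, "
          f"cost ${s['cost']:.2f}, cost per conversion ${s['cost_per_conversion']:.2f}")
```

Under these assumptions, doubling CTR and doubling conversion rate produce the same number of conversions, but only the conversion rate change lowers cost per conversion, because the extra clicks from a higher CTR still have to be paid for.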

Example B

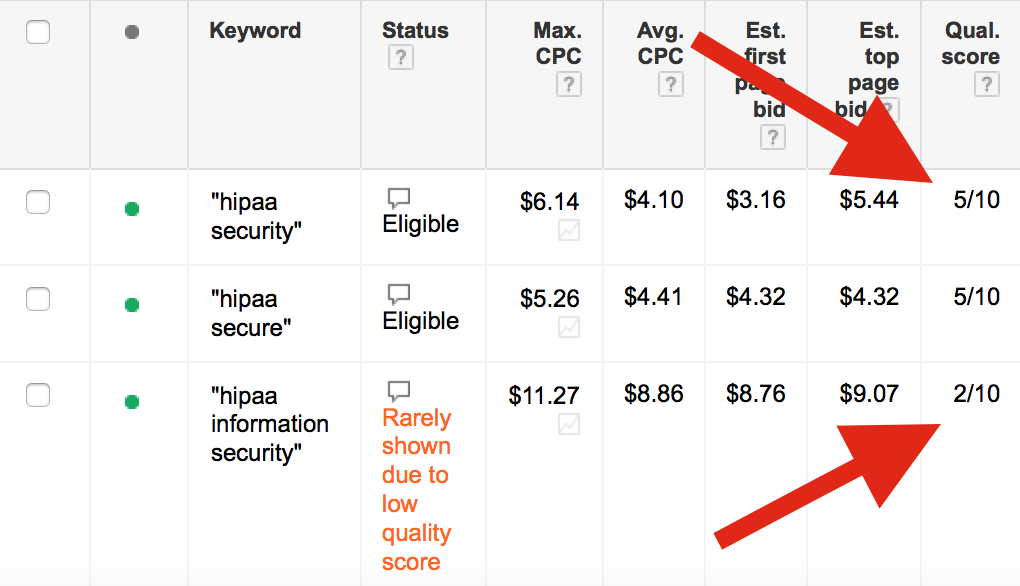

This example highlights a concern regarding how ad relevance is determined. The ad group in this example has two keywords: ‘hippa security’ and ‘hippa information security’. In my opinion, these two keywords are one and the same. The only difference between the two is that ‘hippa security’ does not contain the word ‘information’.

That being said, anyone searching on either of these keywords who has a working knowledge of the industry (i.e., qualified prospects) would know that both keywords mean the same thing. Whether or not the keyword contains ‘information’, the essence of ‘hippa’ is to protect the privacy of information. However, the keyword ‘hippa information security’ is deemed to have less ad relevance.

In reality, ‘hippa information security’ is the more targeted keyword. It has been penalized even though its intent is clear and the landing page associated with it uses the keyword in its page content.

From this example, it appears to me that ad relevance is based on automated word matching that doesn’t take into account a human’s ability to discern the true intent of a keyword, ad, or landing page.

Example C

Google’s official explanation of ‘landing page experience’ states that this designation is based on a ‘number of factors’. What it doesn’t explicitly say is whether conversion rate plays a part in the landing page experience calculation.

A good or bad landing page experience is directly tied to conversion rate. If a site is deemed to have a ‘bad user experience’ but conversion rate is strong and the page is achieving customer goals, then that page actually delivers a good user experience. On the other hand, if a site is deemed to have an optimal user experience because it follows every best and common practice that Google looks for, but conversion rate is poor, then that landing page experience is really not optimal, even if Google says it is.

Until Google or any other search engine provides concrete proof that conversion rate is factored into the landing page experience metric, I find it very difficult to lend much weight to the designation Google provides.

Conclusion

We shouldn’t ignore quality score when conducting our account analysis, nor should we eliminate it. Instead, the primary focus should be on whether or not your PPC campaigns are meeting hard business goals.

Optimizing strictly for quality score usually involves pulling account levers that work counter to meeting profit and revenue goals. Plan your optimizations with the chief objective of hitting goals, even at the expense of focusing on quality score. Once those goals are met, focus on growing your account so more prospects click through to your landing pages and convert. Doing these things will reap many more benefits than simply increasing quality score.

I look forward to your comments about quality score. Most of us have strong opinions about its benefits and usefulness!